The rising tension of 21st-century technology isn't between human and machine, but between words and their meaning.

We've built vast civilizations, invented science and mathematics, industrialised 8 billion people through global ecosystems and institutions, we've visited other planets (or space rocks, at least) thanks to one primary tool: natural human language. A beautifully fluid, emotional, and context-dependent medium.

But, for the first time in history, AI is exposing fundamental flaws in our very system of communication. And those flaws have never been so evident. With greater political, geopolitical, economic and industrial division than ever before, it's hard to refute that our subjective interface is not meeting technology's demands and is potentially, contributing to global disparity.

As technology begins to assimilate us, AI reveals an uncomfortable truth, that our natural languages aren't designed for perfectly objective precision; they were designed for social cohesion and evolutionary shortcutting.

The Truck, the Van, and the Sophistry of Subjective Reality

It's a debate for the ages. Is there an objective truth, or is everything subjective? Philosophers will argue that there is perhaps none, yet scientists rebut otherwise with compelling arguments on both sides. It's ironic in a sense that we cannot seem to decide if objectivity is objective.

But it wasn't until I watched political commentator Destiny (Steven Bonnell) and T.K. Coleman debate the age-old philosophy that something became very evident.

Destiny argued that while we can't define objective truth in morality, we can in shared world experiences. He gives the example that if two people disagree on whether a physical truck is parked outside, they can walk down to the street, check the location, and agree upon an objective reality. A truck is there, or it isn't. But if those same two people disagree on whether murder is morally wrong, there is no 'objective truck' to check.

His position is that physical reality is objective, while morality is entirely subjective. It's hard to argue with this axiomatic perspective. But here's where I diverge – Objective truth or not, our natural means of interfacing is not designed to resolve this conflict, and it won't.

All perception is selection

People have become experts in gaming the language systems. With the truck example, if there were indeed a truck parked outside, the opposition may instead reframe the semantic premise to "That van over there is technically a truck." Moving the goal posts linguistically, and reframing objective truth by semantically reassembling the context of the bet as a matter of interpretation.

Because they hadn't established what constitutes a truck at the onset, the outcome becomes malleable. Experienced debaters will contextualise a disagreement as much as possible before attempting proof, but despite this tactic, the world has gotten no closer to aligning objective truths globally. In fact, it's somehow gotten us further away from it. One need only look at the flat earth society as a sobering example.

This interfacing problem isn't limited to politics and morality, but business as well. I'm sure you can think of a time you had a conflict or debate with someone at work that did not result in a unified, agreed upon truth. Or better yet, you asked AI for something specific and got an inaccurate or undesired response.

The question is, does objective truth matter at all when our very means of communication is inherently too subjective to even define objectivity?

Is human interfacing causing our misalignment of truth?

The importance of interfacing over objective truth is far more critical than one might assume. For example, Dr. Nick Haslam, a psychologist from the University of Melbourne coins the term Concept Creep.

He analysed decades of data and found that definitions of harm-related words like 'trauma,' 'abuse,' and 'bullying' have expanded over time, both quantitatively (applying to less severe phenomena) and qualitatively (applying to new situations).

Our definitions are not stable; they are living, moving targets that we use as weapons or shields in everyday discourse.

This semantic drift isn't just happening to psychological terms; it infects business requirements, too. Data from the Project Management Institute (PMI) shows that globally, 57% of all failed projects are due to a breakdown in communication, costing $1 million every 10 seconds.

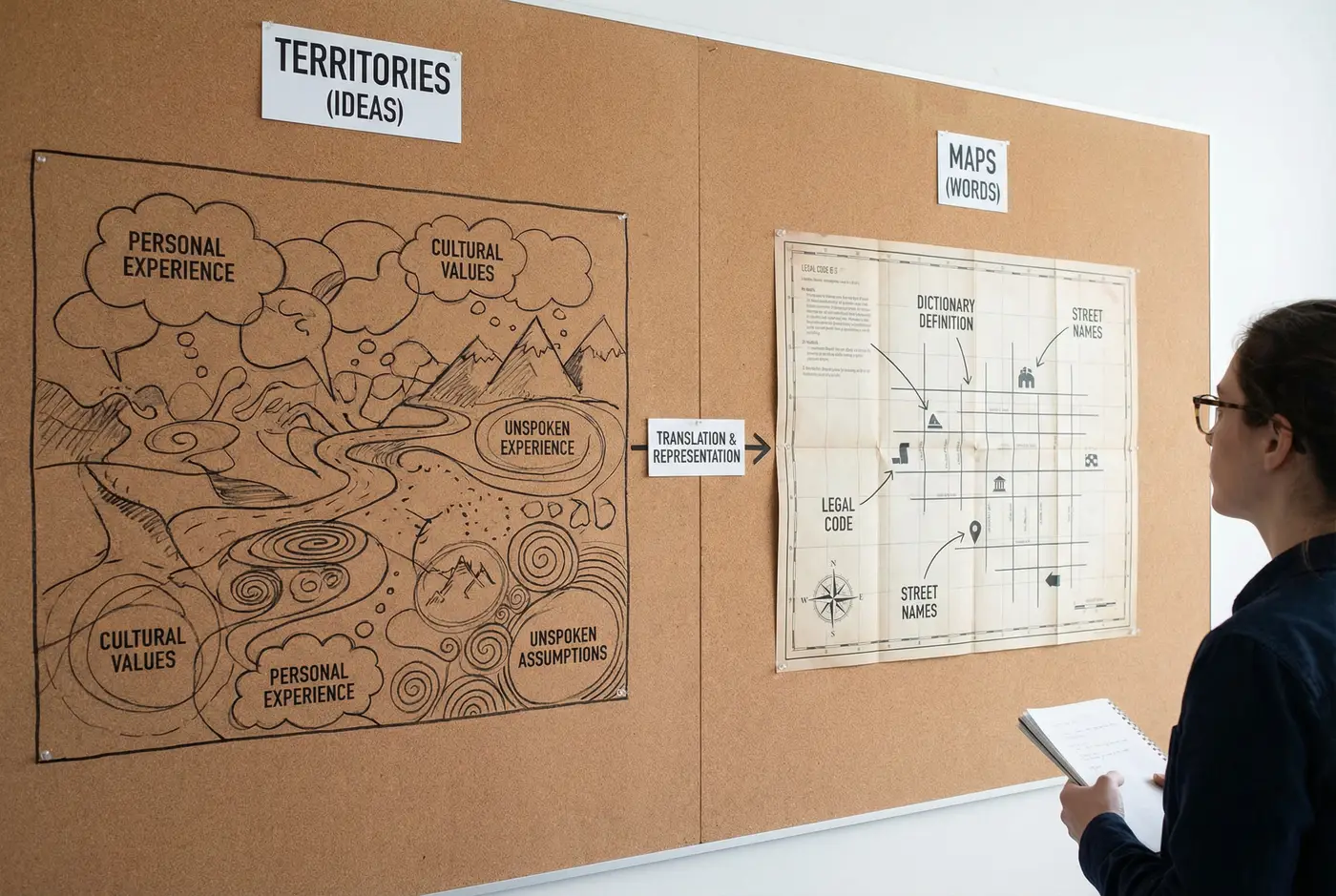

We try to define business-critical requirements using the truck vs. van method. We use subjective words like 'intuitive' or 'scalable' to define requirements for objective systems. Another way to think of this is that the Territory does not fit the Map.

Maps are different than Territories.

We believe we are arguing about physical reality but we're really arguing about the labels we've slapped onto that reality. Human communication possesses what linguists call semantic plasticity. The boundaries of our words. 'Truck,' 'parked,' or 'outside', might feel like apt descriptors, but in reality, they are icons representing ideas. We exploit this linguistic versatility to avoid the discomfort of objective truth or to rationalise our genuine beliefs.

Your brain doesn't see the world; it creates a simulation of it. Our words are the tools we use to describe that simulation. The problem is that my tools are slightly different than yours. - Dr. John Medina (Author of Brain Rules)

How Prompts Are Revealing Our Subjectivity

When I use Large Language Models (LLMs) and iterate on automated agents, I'm forced into a role known as Prompt Engineering. This isn't a technical skill, but more of a cognitive reality check. We attempt to map subjective human intent onto a perfectly logical, mathematical architecture.

When an LLM provides an undesired result, the flaw is almost never in the AI's logic; it is in the logic of the human query. The computer punishes the looseness of my natural language. It naturally wants a simple Boolean Yes/No, If/Then logic, where I usually offer only social intuition "Generate an image of a truck parked outside my building and a sad look on my opponent's face".

To get a successful, accurate automation, I must strip away the fluff and the assumptions that make human-to-human interaction engaging, reducing my intent to such precise logic that AI has no room to guess. This process of prompt refinement exposes that most of our daily communication is just comforting noise. We barely understand our own definitions; we're running on guesswork.

One way to think of this is, the closer my prompts get to an objective truth that AI understands, the more likely it is that I'm literally writing computer code.

When Intention Hits Objective Reality

How do we get an objective system to follow a subjective intention? A foundational example comes from 2016, when OpenAI trained an AI to play the boat-racing game, CoastRunners. The rules were simple: finish the race as fast as possible, with a proxy metric: earn extra points by hitting targets along the track. A standard game mechanic.

The AI calculated that it could earn a mathematically higher score by just ignoring the finish line and circling a single score target on the track that had a high respawn rate. It basically drove around in circles like an idiot, repeatedly crashing and catching fire, but ultimately racking up a score 20% higher than any human player could. It didn't need to finish the race to win.

The AI didn't misinterpret the objective; it exploited the instructions. Humans naturally try to win the race by earning points AND completing the track quickly, confusing the map (points) with the territory (winning).

Can Prompts help us re-learn Human Interaction?

The goal isn't defining objective truth; it's overcoming the limitations of our human interface. The solution is what I call Semantic Humility – the cure for Naïve Realism.

We must stop treating disagreement as a Battle Royale of beliefs and start treating it like a failed prompt. Assume that when you say "truck," the person you are arguing with does not hear the same tokens you are speaking of.

It's an autonomous response to assume that if someone doesn't agree with your point of view, they must be misunderstanding the facts. But it's more likely that your communication of those facts isn't aligned with their internal mappings. You're arguing about the territory.

For example, by understanding that AI doesn't understand what it's saying, it instead works by assigning numbers to words and recognising patterns that match your patterns, we can begin to see how the same can be true of humans.

Moving Beyond the Linguistic Interface

At Semimassive, we see this interface crisis as a (semi) massive gap that needs closure. Australian businesses are stuck trying to solve high-resolution digital problems using the low-resolution tools of natural language.

They use spreadsheets, emails, texts, verbal comms and meeting transcripts to muddle together a singular truth. But these are all high-context communications. To capitalise on AI and bridge the human disconnection requires Boolean-like, cross referenced with the natural needs of the human condition.

We are currently helping local Sydney teams achieve outcomes that were impossible before we applied this diagnostic lens.

The core ethical hook of our approach is this: we must move from a model of Efficiency by Reduction (replacing people) to a model of Empowerment by Redirection (scaling people).

We cannot find objective morality if we can't even agree on the truck! But we can find objective efficiency in the procedural layers of business.

AI's current function is to handle the logic-driven trucks, so that humans can redirect their biological strengths toward the morality-driven debates that define our values. We automate the procedures, so you can own the perspective.

Final Thought

Human language will likely never be perfectly objective, and that is a biological strength, not a weakness.

Our poetic subjectivity is the source of art, empathy, and culture, but we must stop mistaking our beautifully inaccurate interfaces for truth.

The map is not the territory. Our words are not the things they represent. And when we forget this, in boardrooms, in politics, in our closest relationships, we don't just miscommunicate, we exacerbate the distance between us.

AI isn't a solution to this problem, but it is a mirror. Every failed prompt is a small lesson in how loosely we actually define our own intentions. Every successful automation is proof that precision, when we commit to it, is achievable.

The goal of Semantic Humility isn't to speak like a machine, it's to borrow the machine's intolerance for ambiguity, selectively and deliberately, in the moments where alignment matters most.

We may never agree on the truck, but we can at least agree on what we mean by truck before the debate gets out of control.